OPA Gatekeeper in the Admission Controllers' World

A couple of weeks ago, while browsing the Cloud Native Computing Foundation (CNCF) website, I stumbled upon one of its graduated projects: Open Policy Agent (OPA). After reading some of the documentation on OPA’s website, I got interested and decided to explore this open-source project.

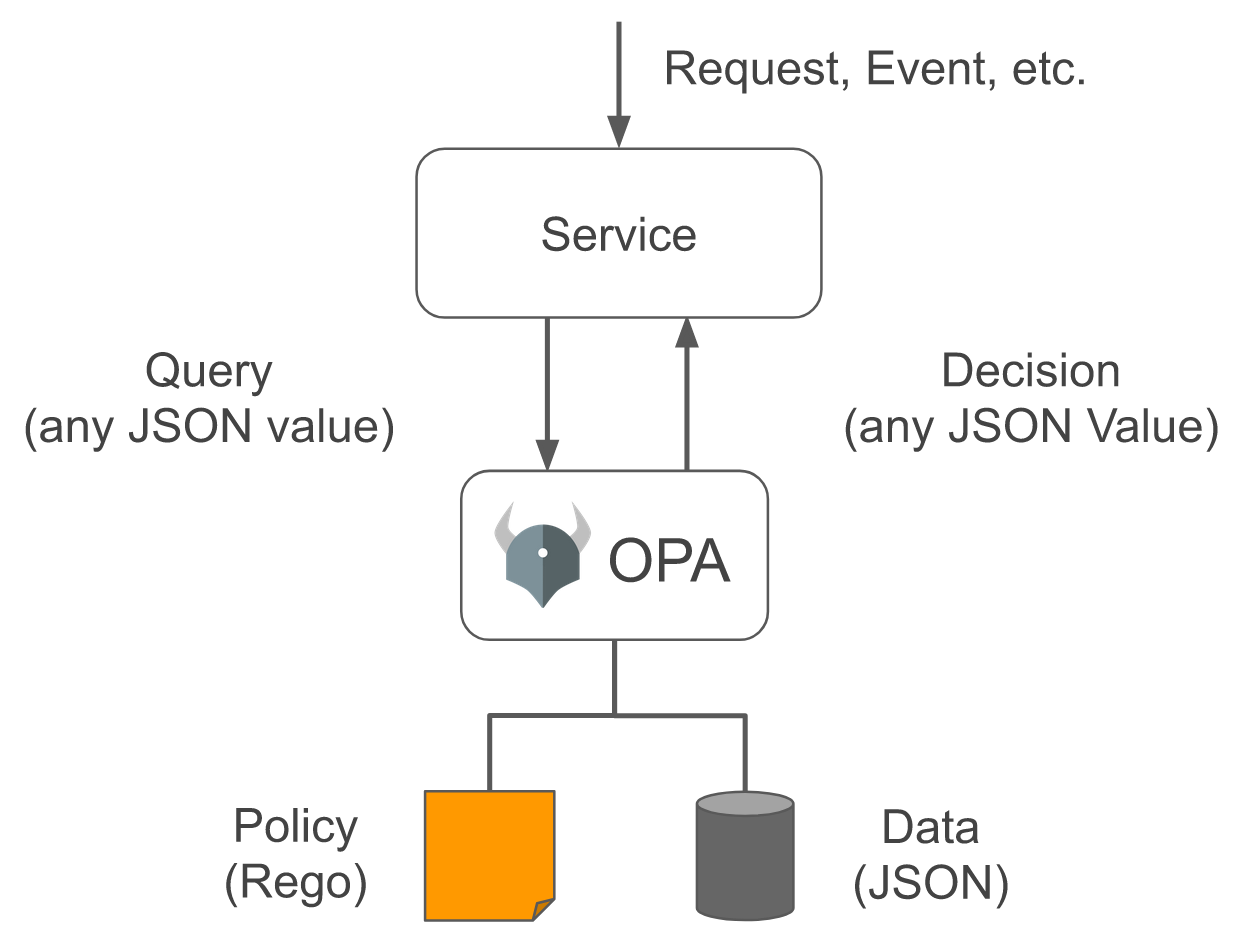

OPA is a general-purpose policy engine. Its main goal is to make decisions based on Input, Policies (written in Rego), and Data, while decoupling the policy logic from the services' code.
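To make the decoupling idea concrete, here is a minimal Python sketch. It is not OPA and not Rego: it only illustrates that the service builds an input document and delegates the decision to a policy that also sees external data. Every name in it (`decide`, `team_is_allowed`, `allowed_teams`, the `team` field) is hypothetical.

```python
from typing import Any, Callable, Dict

# A "policy" here is just a function of (input, data) -> decision.
# In real OPA this role is played by a Rego module evaluated by the engine.
Policy = Callable[[Dict[str, Any], Dict[str, Any]], bool]


def team_is_allowed(input_doc: Dict[str, Any], data: Dict[str, Any]) -> bool:
    """Hypothetical policy: allow only requests from teams listed in the data."""
    return input_doc.get("team") in data.get("allowed_teams", [])


def decide(policy: Policy, input_doc: Dict[str, Any], data: Dict[str, Any]) -> bool:
    """The service asks for a decision instead of hard-coding the rule."""
    return policy(input_doc, data)


data = {"allowed_teams": ["platform", "payments"]}
print(decide(team_is_allowed, {"team": "platform"}, data))   # True
print(decide(team_is_allowed, {"team": "marketing"}, data))  # False
```

Changing who is allowed then means changing the policy or the data, not the service that calls `decide`.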

There are many use cases for OPA. Since I’ve been investing in learning more about Kubernetes, I opted for a project that integrates OPA and Kubernetes: Gatekeeper.

Kubernetes Admission Controllers

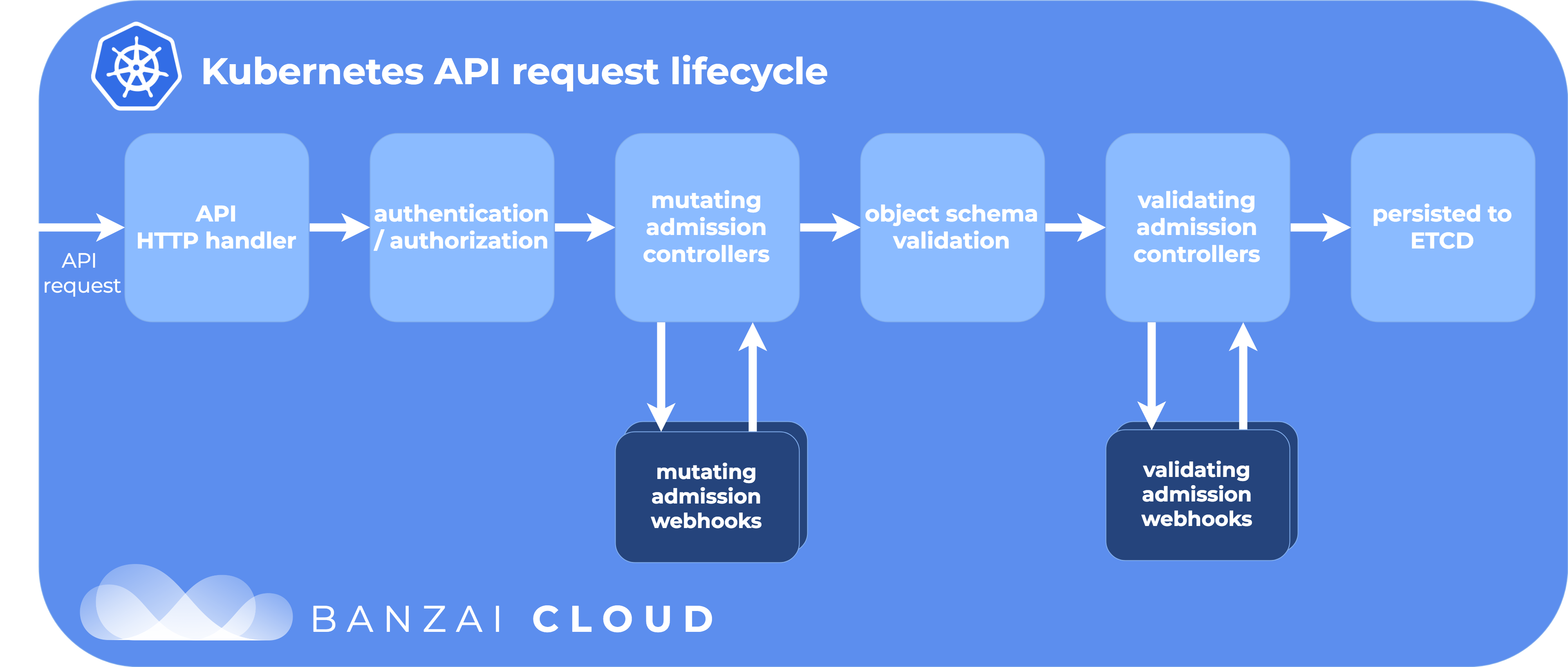

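Admission Controllers intercept and process requests made to the Kubernetes API after the request has been authenticated and authorized, but before the object is persisted. This means that if a request is denied, the object is neither persisted in etcd nor acted upon.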

Some well-known admission controllers are ResourceQuota, LimitRanger, and NamespaceLifecycle. They can be enabled with the --enable-admission-plugins flag of the kube-apiserver.

There are, however, two special admission controllers: MutatingAdmissionWebhook and ValidatingAdmissionWebhook. These controllers allow the extension of Kubernetes API functionality via webhooks.

Admission Controllers can be classified as “mutating”, “validating” or both. Mutating controllers may modify the objects they admit. Validating controllers return a binary response - yes or no - according to the object contents.
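The two phases can be sketched in a few lines of Python. This is a toy model, not the kube-apiserver implementation: it only shows that the mutating phase runs first and that the validating phase then accepts or rejects the (possibly modified) object. The mutator, the validator, and the label names are made up for illustration.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

Obj = Dict[str, Any]
Mutator = Callable[[Obj], Obj]              # returns a (possibly modified) copy
Validator = Callable[[Obj], Optional[str]]  # returns a denial reason, or None


def admit(obj: Obj, mutators: List[Mutator], validators: List[Validator]) -> Tuple[bool, Obj, List[str]]:
    """Run the mutating phase first, then the validating phase."""
    for mutate in mutators:
        obj = mutate(obj)
    reasons = [r for r in (validate(obj) for validate in validators) if r is not None]
    return (not reasons, obj, reasons)


def add_default_label(obj: Obj) -> Obj:
    """Hypothetical mutator: add a label when it is missing."""
    labels = dict(obj.get("labels", {}))
    labels.setdefault("managed-by", "example")
    return {**obj, "labels": labels}


def require_owner(obj: Obj) -> Optional[str]:
    """Hypothetical validator: deny objects without an owner label."""
    if "owner" not in obj.get("labels", {}):
        return "missing required label: owner"
    return None


allowed, final_obj, reasons = admit(
    {"name": "demo", "labels": {}}, [add_default_label], [require_owner]
)
print(allowed, reasons)  # False ['missing required label: owner']
```

A single denial from any validator is enough to reject the request, which is why the validating phase is modelled as a list of reasons that must stay empty.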

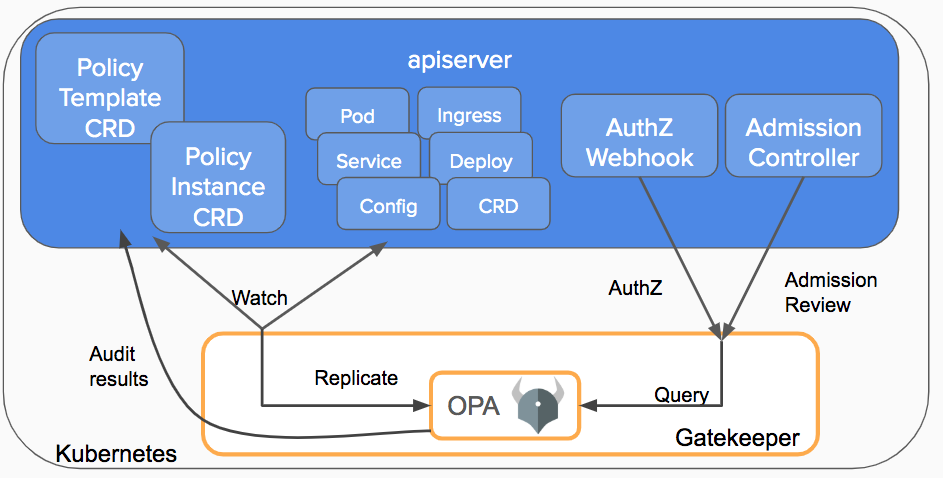

OPA Gatekeeper

Gatekeeper v3.0 allows the creation of policies based on Custom Resource Definitions (CRDs). It provides validating and mutating admission control through a customizable admission webhook.

Hands-on Example

Let’s imagine that you want to enforce the following policy:

Pods can only be launched in a Namespace with a ResourceQuota defined.

How can we enforce it in our Kubernetes cluster? In this hands-on example, we will go through it step by step. You can find the source code in my Gatekeeper GitHub repository.

Bootstrap

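Create a Kubernetes cluster. The flag below explicitly enables only the NodeRestriction admission plugin; plugins that the kube-apiserver enables by default, including MutatingAdmissionWebhook and ValidatingAdmissionWebhook, stay on:

    minikube start --extra-config=apiserver.enable-admission-plugins=NodeRestriction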

Install Gatekeeper with Helm (other installation methods are available):

    helm repo add gatekeeper https://open-policy-agent.github.io/gatekeeper/charts # first time only
    helm install gatekeeper gatekeeper/gatekeeper -n gatekeeper-system --create-namespace

Create And Test Policy

In this example, we will need to sync some data to OPA. It’s not possible to know if a Namespace has a ResourceQuota without having access to all ResourceQuotas. The sync is possible with the Config resource:

    # sync.yaml
    apiVersion: config.gatekeeper.sh/v1alpha1
    kind: Config
    metadata:
      name: config
      namespace: gatekeeper-system
    spec:
      sync:
        syncOnly:
          - group: ""
            version: "v1"
            kind: "ResourceQuota"

    kubectl apply -f sync.yaml
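Once synced, Gatekeeper exposes namespaced objects to Rego under `data.inventory.namespace[<namespace>][<groupVersion>][<kind>][<name>]`. The Python dictionary below is a hand-written illustration of that shape, not real Gatekeeper output: it reuses the Namespace and ResourceQuota names from the test later in this post, the object body is trimmed, and the `quotas_in` helper is hypothetical.

```python
from typing import Any, Dict

# Hand-written stand-in for what data.inventory could look like after the sync.
inventory: Dict[str, Any] = {
    "namespace": {
        "bounded-namespace": {
            "v1": {
                "ResourceQuota": {
                    "bounded-namespace-quota": {
                        "spec": {"hard": {"requests.cpu": "1", "requests.memory": "1Gi"}}
                    }
                }
            }
        }
    }
}


def quotas_in(namespace: str) -> Dict[str, Any]:
    """Return the synced ResourceQuotas of a namespace (empty if there are none)."""
    return inventory["namespace"].get(namespace, {}).get("v1", {}).get("ResourceQuota", {})


print(sorted(quotas_in("bounded-namespace")))    # ['bounded-namespace-quota']
print(sorted(quotas_in("unbounded-namespace")))  # []
```

A Namespace without any synced ResourceQuota simply has no entry at that path, which is the condition the policy checks for.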

Then, we will create a ConstraintTemplate[1] that contains the Rego policy:

    # template.yaml
    apiVersion: templates.gatekeeper.sh/v1beta1
    kind: ConstraintTemplate
    metadata:
      name: k8srequiredresourcequota
      annotations:
        description: Requires Pods to launch in Namespaces with ResourceQuotas.
    spec:
      crd:
        spec:
          names:
            kind: K8sRequiredResourceQuota
      targets:
        - target: admission.k8s.gatekeeper.sh
          rego: |
            package k8srequiredresourcequota

            violation[{"msg": msg}] {
              input.review.object.kind == "Pod"
              requestns := input.review.object.metadata.namespace
              not data.inventory.namespace[requestns]["v1"]["ResourceQuota"]
              msg := sprintf("container <%v> could not be created because the <%v> namespace does not have ResourceQuotas defined", [input.review.object.metadata.name,input.review.object.metadata.namespace])
            }

    kubectl apply -f template.yaml
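For readers new to Rego: the body of the `violation` rule is a conjunction, so every line must hold for a violation to be produced, and an undefined value makes the whole body fail. The Python function below is a rough equivalent written only to show that reading. It is not how Gatekeeper evaluates the policy, and the function name and sample objects are mine.

```python
from typing import Any, Dict, List


def violations(review_object: Dict[str, Any], inventory: Dict[str, Any]) -> List[Dict[str, str]]:
    """Rough Python reading of the `violation` rule in template.yaml."""
    # input.review.object.kind == "Pod"
    if review_object.get("kind") != "Pod":
        return []
    metadata = review_object.get("metadata", {})
    name = metadata.get("name")
    requestns = metadata.get("namespace")
    # An undefined name or namespace makes the Rego body fail: no violation.
    if name is None or requestns is None:
        return []
    # not data.inventory.namespace[requestns]["v1"]["ResourceQuota"]
    # The path only has to exist; Rego treats any value other than false as true.
    by_version = inventory.get("namespace", {}).get(requestns, {}).get("v1", {})
    if "ResourceQuota" in by_version:
        return []
    msg = (
        "container <%s> could not be created because the <%s> namespace "
        "does not have ResourceQuotas defined" % (name, requestns)
    )
    return [{"msg": msg}]


inventory = {"namespace": {"bounded-namespace": {"v1": {"ResourceQuota": {"bounded-namespace-quota": {}}}}}}
blocked = {"kind": "Pod", "metadata": {"name": "blocked-pod", "namespace": "unbounded-namespace"}}
allowed = {"kind": "Pod", "metadata": {"name": "allowed-pod", "namespace": "bounded-namespace"}}

print(violations(blocked, inventory))
print(violations(allowed, inventory))  # []
```

The first call returns one entry whose message names `blocked-pod` and `unbounded-namespace`; the second returns an empty list because the Namespace has a synced ResourceQuota.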

Finally, we create the Constraint, based on the previous ConstraintTemplate:

    # constraint.yaml
    apiVersion: constraints.gatekeeper.sh/v1beta1
    kind: K8sRequiredResourceQuota
    metadata:
      name: namespace-must-have-resourcequota
    spec:
      match:
        kinds:
          - apiGroups: [""]
            kinds: ["Pod"]
        excludedNamespaces:
          - gatekeeper-system

    kubectl apply -f constraint.yaml
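The `match` block decides which objects the Constraint is evaluated against. The sketch below is a deliberately simplified Python model of the two fields used here. It assumes `kinds` is always set (as in constraint.yaml), handles only exact values and the `*` wildcard, and ignores the other match criteria Gatekeeper supports. The function names are hypothetical.

```python
from typing import Any, Dict, List


def _accepts(value: str, allowed: List[str]) -> bool:
    """True when the value is listed explicitly or a "*" wildcard is present."""
    return "*" in allowed or value in allowed


def constraint_matches(match: Dict[str, Any], api_group: str, kind: str, namespace: str) -> bool:
    """Simplified model of `kinds` and `excludedNamespaces` matching."""
    if namespace in match.get("excludedNamespaces", []):
        return False
    return any(
        _accepts(api_group, entry["apiGroups"]) and _accepts(kind, entry["kinds"])
        for entry in match["kinds"]
    )


match = {
    "kinds": [{"apiGroups": [""], "kinds": ["Pod"]}],
    "excludedNamespaces": ["gatekeeper-system"],
}

print(constraint_matches(match, "", "Pod", "unbounded-namespace"))  # True
print(constraint_matches(match, "", "Pod", "gatekeeper-system"))    # False
print(constraint_matches(match, "", "Pod", "kube-system"))          # True
```

The last call is worth noting: only gatekeeper-system is excluded, so Pods in kube-system are still subject to the Constraint.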

We are ready to test it!

To start, we will create a Namespace without a ResourceQuota and try to launch a Pod in it:

    # ns-without-quota.yaml
    apiVersion: v1
    kind: Namespace
    metadata:
      name: unbounded-namespace

    # blocked-pod.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: blocked-pod
      namespace: unbounded-namespace
    spec:
      containers:
        - image: nginx
          name: blocked-pod

    kubectl apply -f ns-without-quota.yaml
    kubectl apply -f blocked-pod.yaml # Should give Error

The second kubectl apply should give an error (Error from server). If it does, our desired policy is being enforced as it should.

Now let’s try to launch a Pod in a Namespace with a ResourceQuota associated:

    # ns-with-quota.yaml
    apiVersion: v1
    kind: Namespace
    metadata:
      name: bounded-namespace
    ---
    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: bounded-namespace-quota
      namespace: bounded-namespace
    spec:
      hard:
        requests.cpu: 1
        requests.memory: 1Gi

    # allowed-pod.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: allowed-pod
      namespace: bounded-namespace
    spec:
      containers:
        - image: nginx
          name: allowed-pod
          resources:
            requests:
              cpu: 0.5
              memory: 500Mi

    kubectl apply -f ns-with-quota.yaml
    kubectl apply -f allowed-pod.yaml # Should work

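This time the Pod should be successfully launched since we are not violating the namespace-must-have-resourcequota Constraint. Note that the Pod declares CPU and memory requests: once a quota on requests.cpu and requests.memory exists in a Namespace, the built-in ResourceQuota admission controller rejects Pods that omit them.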

What about Pods that were launched before the Constraint was created? Gatekeeper has an Audit functionality that periodically evaluates existing resources against the Constraints and reports the violations in each Constraint’s status.

For example:

    kubectl describe K8sRequiredResourceQuota namespace-must-have-resourcequota

    Name:         namespace-must-have-resourcequota
    Namespace:
    Labels:       <none>
    Annotations:  <none>
    API Version:  constraints.gatekeeper.sh/v1beta1
    Kind:         K8sRequiredResourceQuota
    Metadata:
      (...)
    Spec:
      Match:
        Excluded Namespaces:
          gatekeeper-system
        Kinds:
          API Groups:
          Kinds:
            Pod
    Status:
      (...)
      Total Violations:  7
      Violations:
        Enforcement Action:  deny
        Kind:                Pod
        Message:             container <coredns-78fcd69978-7vzw4> could not be created because the <kube-system> namespace does not have ResourceQuotas defined
        Name:                coredns-78fcd69978-7vzw4
        Namespace:           kube-system
        Enforcement Action:  deny
        Kind:                Pod
        Message:             container <etcd-minikube> could not be created because the <kube-system> namespace does not have ResourceQuotas defined
        Name:                etcd-minikube
        Namespace:           kube-system
        (...)
    Events:  <none>

In this case, we can see seven violations in the kube-system Namespace, which we did not exclude in our Constraint.
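If you would rather post-process the audit results than read them, the Constraint can be fetched as JSON (for example with `kubectl get k8srequiredresourcequota namespace-must-have-resourcequota -o json`) and summarized. The sketch below assumes the JSON field names are the camelCase counterparts of what `kubectl describe` prints (`totalViolations`, `violations`, `enforcementAction`, and so on). The sample is hand-trimmed to the two violations shown above, and the helper name is hypothetical.

```python
from collections import Counter
from typing import Any, Dict


def violations_by_namespace(constraint: Dict[str, Any]) -> Counter:
    """Count the violations listed in a Constraint's status, per namespace."""
    listed = constraint.get("status", {}).get("violations", [])
    return Counter(v.get("namespace", "") for v in listed)


# Hand-trimmed sample: only the two violations visible in the describe output.
sample = {
    "status": {
        "totalViolations": 7,
        "violations": [
            {
                "enforcementAction": "deny",
                "kind": "Pod",
                "message": "container <coredns-78fcd69978-7vzw4> could not be created because the <kube-system> namespace does not have ResourceQuotas defined",
                "name": "coredns-78fcd69978-7vzw4",
                "namespace": "kube-system",
            },
            {
                "enforcementAction": "deny",
                "kind": "Pod",
                "message": "container <etcd-minikube> could not be created because the <kube-system> namespace does not have ResourceQuotas defined",
                "name": "etcd-minikube",
                "namespace": "kube-system",
            },
        ],
    }
}

counts = violations_by_namespace(sample)
print(dict(counts))                         # {'kube-system': 2}
print(sample["status"]["totalViolations"])  # 7
```

The helper counts only the entries that are actually listed, so compare its result with the total before drawing conclusions from a truncated list like this one.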

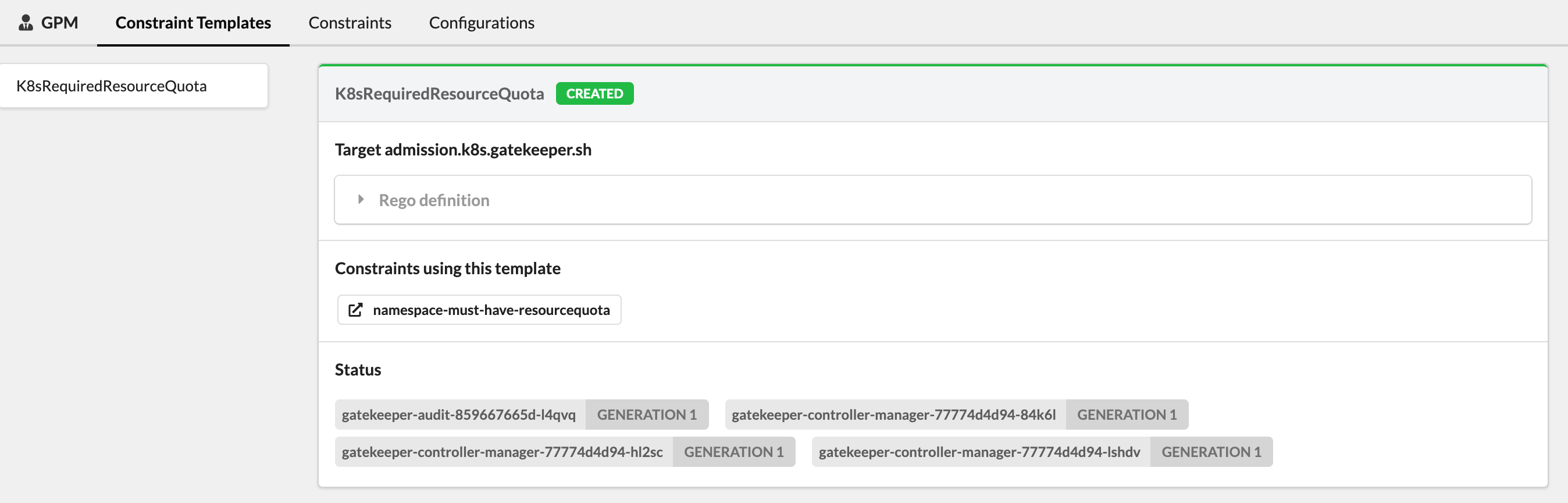

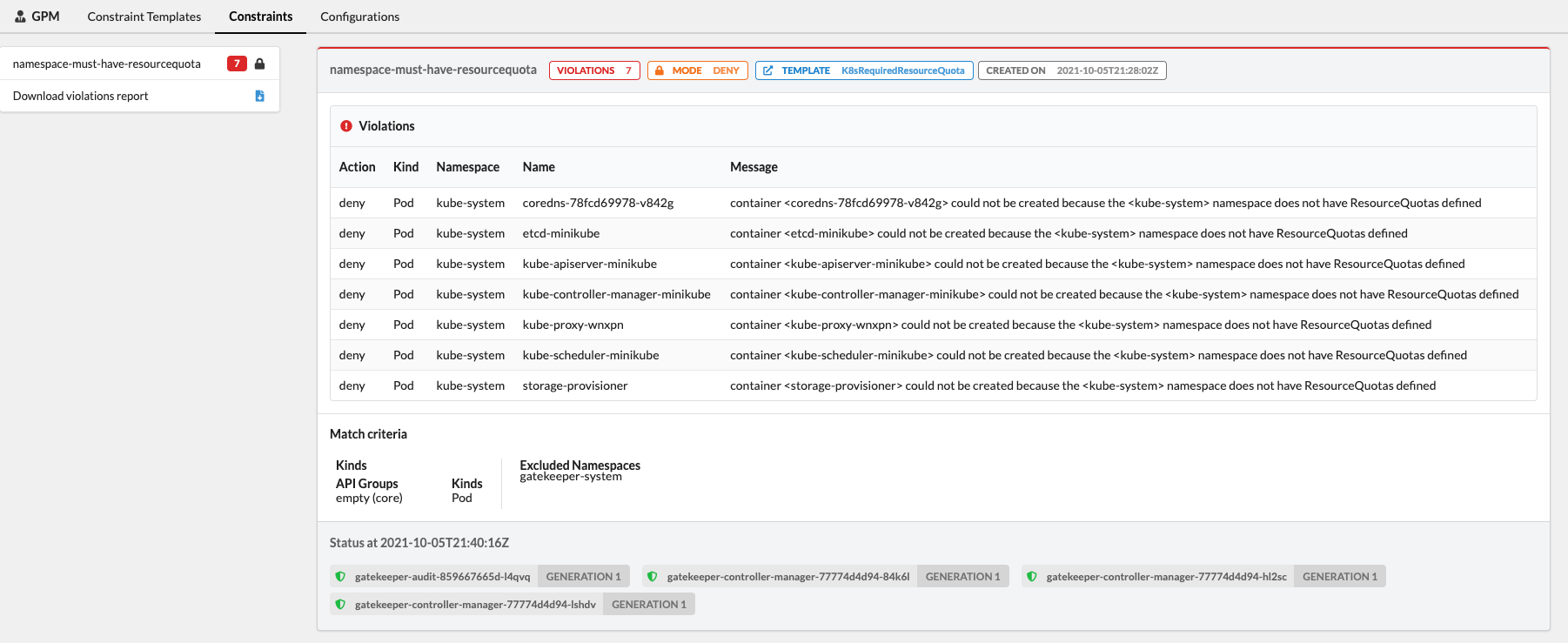

Bonus: Gatekeeper Policy Manager

There is an interesting read-only web UI called Gatekeeper Policy Manager (GPM). It can run locally in a container or be deployed in the cluster with Kustomize or Helm:

    git clone https://github.com/sighupio/gatekeeper-policy-manager.git
    helm install gpm gatekeeper-policy-manager/chart --namespace gatekeeper-system --set config.secretKey=dummy

The result:

Cleanup

    helm list -A # list helm releases
    helm uninstall gpm -n gatekeeper-system
    helm uninstall gatekeeper -n gatekeeper-system
    minikube delete

Alternatives

Some alternatives to Gatekeeper that I did not explore but also look promising:

- Kyverno (CNCF Sandbox Project)

- Kubewarden (SUSE Rancher)

Final Remarks

OPA and Gatekeeper can be a good last line of defense by enforcing policies before anything is persisted. The Audit functionality can also be useful to detect existing violations that would otherwise be hard to find.

Go ahead and explore the OPA Gatekeeper Library GitHub repository, which contains examples that can serve as inspiration.

[1] If you want to learn more about the fields of a ConstraintTemplate, check the OPA Constraint Framework.